Researchers applied the “target trial emulation framework” to highlight important design considerations for observational studies that use real-world data to emulate randomized clinical trials.

The work, which was supported by the NIH Pragmatic Trials Collaboratory’s Distributed Research Network and by a grant from the National Institute on Aging, was published this week in Alzheimer’s & Dementia: Translational Research & Clinical Interventions.

The US Food and Drug Administration has approved anti-amyloid beta monoclonal antibodies for the treatment of patients with early Alzheimer disease. However, the findings of the randomized trials that supported these approvals may have limited generalizability to clinical practice due to the trials’ strict eligibility criteria, limited treatment and follow-up periods, and close monitoring. Thus, little is known about the safety of anti-amyloid therapies in real-world settings.

Existing real-world data, such as information available in electronic health records and administrative claims databases, can support studies of safety and utilization outcomes. The target trial emulation framework can guide the design of such studies while minimizing the forms of bias commonly encountered in observational research.

Using the anti-amyloid therapy lecanemab as an example, the researchers described the key design and analytical considerations for observational studies intended to emulate randomized trials.

Authors of the article include Xiaojuan Li, Bahareh Rasouli, Jennifer Lyons, Noelle Cocoros, and Richard Platt of Harvard Medical School and the Harvard Pilgrim Health Care Institute; and Sonal Singh, Ivan Abi-Elias, and Jerry Gurwitz of UMass Chan Medical School.

Learn more about the NIH Collaboratory’s Distributed Research Network.

Researchers with PRIM-ER, an NIH Collaboratory Trial, published 2 innovative statistical techniques for evaluating intervention effects in stepped-wedge, cluster randomized trials. The new models, which use Bayesian methods, outperformed traditional analytic methods and other Bayesian approaches in simulations and real-world applications.

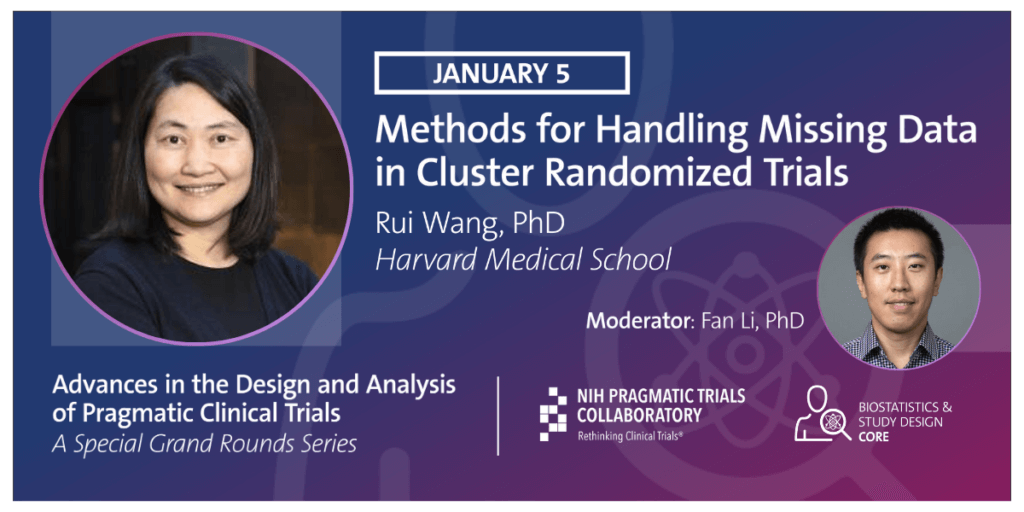

In this Friday's PCT Grand Rounds, Rui Wang of Harvard Medical School will offer the final session in our special series, Advances in the Design and Analysis of Pragmatic Clinical Trials, with

In this Friday’s PCT Grand Rounds, Jim Hughes of the University of Washington will continue our special series, Advances in the Design and Analysis of Pragmatic Clinical Trials, with his presentation,

In this Friday’s PCT Grand Rounds, Brennan Kahan of University College London will present

In a