In PCTs, CDS can be integral to the conduct of studies by supporting the intervention or by serving as the intervention itself. Here, we will give some use cases of CDS and how they may support or be the focus of PCTs.

Recruitment

One obvious and practical use of CDS is recruitment for trials. Recruiting subjects can be very time-consuming and costly without CDS if a member of the research team must manually audit patient charts for inclusion and exclusion criteria, potentially several times a day depending on the research protocol. CDS can facilitate patient recruitment by automatically identifying patients with specific lab values, diagnoses, ages, genders, and other inclusion and exclusion criteria as specified by the protocol. A registry can perform this function, track patient flow for future reporting, and drive CDS logic based on registry inclusion to contact patients and deliver intervention components. A CDS tool may alert teams when a patient becomes trial eligible or indicates receptivity to participating. Building these CDS tools is feasible with the appropriate resources.
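As a rough illustration of automated eligibility screening, the sketch below applies inclusion and exclusion criteria to simplified patient records. The `Patient` structure, the criteria (adults with type 2 diabetes and an HbA1c of at least 8.0, excluding end-stage renal disease), and the function names are all hypothetical and are not drawn from any trial discussed here; a real tool would query the EHR or a registry.

```python
from dataclasses import dataclass, field


@dataclass
class Patient:
    """Hypothetical, simplified patient record (not a real EHR schema)."""
    patient_id: str
    age: int
    diagnoses: set = field(default_factory=set)   # e.g., ICD-10 codes
    labs: dict = field(default_factory=dict)      # latest lab values by name


def is_eligible(p: Patient) -> bool:
    """Apply illustrative (assumed) inclusion and exclusion criteria."""
    # Inclusion (assumed): adult, type 2 diabetes (E11.9), HbA1c >= 8.0
    included = (
        p.age >= 18
        and "E11.9" in p.diagnoses
        and p.labs.get("hba1c", 0.0) >= 8.0
    )
    # Exclusion (assumed): end-stage renal disease (N18.6)
    excluded = "N18.6" in p.diagnoses
    return included and not excluded


def screen(patients: list) -> list:
    """Return the IDs of patients who meet the criteria, in input order."""
    return [p.patient_id for p in patients if is_eligible(p)]


patients = [
    Patient("A", 54, {"E11.9"}, {"hba1c": 8.6}),
    Patient("B", 61, {"E11.9", "N18.6"}, {"hba1c": 9.1}),  # excluded
    Patient("C", 16, {"E11.9"}, {"hba1c": 9.0}),           # under 18
    Patient("D", 40, {"E11.9"}, {}),                       # no lab value yet
]
candidates = screen(patients)
```

Patient D shows why data availability matters: a missing lab value is treated here as "not eligible", but a protocol might instead flag such patients for manual review.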

There are many ways to identify a list of potential participants for a trial. If the patients are to be approached or assessed for eligibility by their care provider, the CDS may need to be integrated with the workflow. A systematic review (Köpcke and Prokosch 2014) found workflow integration was a more important factor than the actual algorithm of the CDS tool. Aside from the data requirements, it is also important to consider how your recruitment tool will be used and who the intended audience is. Does the research protocol involve acutely ill patients who arrive through the emergency department? If so, are the data available at the time they are needed? In an emergency, not all of the desired data may be available at the point of care through the EHR, which may affect the desired functionality of the tool.

Supporting the Interventions

Many PCTs focus on the application of knowledge—often in the form of clinical practice guidelines—to practice. Some of these guidelines are complex and require multiple components to implement properly, including communications and shared data, information, and knowledge across care teams representing different disciplines and even organizations. Order sets, links to external information, and flowcharts may be created to facilitate information sharing. For instance, there could be a new protocol supporting pain management. To standardize the process, CDS could be used to ensure all clinicians prescribe the correct medications and interventions according to the protocol. In this case, the CDS is not being tested itself. Rather, it is simply supporting the research process by ensuring the intervention is administered as intended.

Case Example: PRIM-ER

The Primary Palliative Care for Emergency Medicine (PRIM-ER) trial is a pragmatic, cluster randomized trial designed to shift emergency care for seriously ill older adults away from treatment of acute illness and injury to goal-concordant palliative care in appropriate patients (Grudzen et al 2019). The intervention includes palliative care education, simulation-based workshops, CDS to identify patients, and audit and feedback (Grudzen et al 2019).

The CDS for PRIM-ER is an alert intended to help emergency department providers identify patients who could be candidates for palliative care, including those who already had consultations or contacts with social services, hospice, or palliative care experts, and patients that had terminal or critically serious conditions. The investigators and research staff initiated discussions with sites 6 to 7 months before implementation. At the first meetings, the investigators described the study and suggested CDS tools used at the host organizations. The meetings included clinical investigators and local IT staff who helped identify which CDS supportive tools would work best in that organization and how. Over the next 6 to 7 months, the investigators provided mapping documents for sites to use or adapt that included known local terms or ICD/CPT and other codes that mapped to concepts that are triggers used to identify patients for palliative care. Sites could use the information to check feasibility of local mapping and to decide what would be the most useful triggers and tools at the specific site. By engaging local experts in design, investigators hope to develop a CDS that not only complements local workflows and existing systems but will also be sustainable.

The customized nature of the CDS design and monitoring at each site required deliberate and thoughtful approaches by the research team. While investigators had guessed that the CDS would need to be flexible, they did expect that it could be more standardized across some sites than it was, particularly those that used the same EHR system. However, because the sites all have different populations, workflows, and policies, the CDS had to be customized at each place. For example, at the first site, the investigators developed an interruptive alert that fired if a potentially eligible patient presented to the emergency department. At a different site, which did not allow interruptive alerts per organizational policy, the investigators used a passive banner on the patient record instead. They determined that the banner would be sufficient for the trial because it addressed the required function (ie, it helped make the emergency department provider aware of the patient condition and possible alternatives, including a palliative care consultation). By focusing on the function, not the form, of the CDS, the investigators deployed a variety of approaches across sites that differed in design but stayed true to the spirit of the CDS.
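One way to picture "function over form" is a small configuration layer in which the message stays constant while its delivery mode follows local policy. The sketch below is purely illustrative: the site names, the policy table, and the function names are assumptions, not the PRIM-ER implementation.

```python
from enum import Enum


class Delivery(Enum):
    INTERRUPTIVE_ALERT = "interruptive_alert"
    PASSIVE_BANNER = "passive_banner"


# Hypothetical site policies; the site names are placeholders.
SITE_ALLOWS_INTERRUPTIVE = {"site_a": True, "site_b": False}


def choose_delivery(site: str) -> Delivery:
    """Pick the form of CDS a site permits; unknown sites get the banner."""
    if SITE_ALLOWS_INTERRUPTIVE.get(site, False):
        return Delivery.INTERRUPTIVE_ALERT
    return Delivery.PASSIVE_BANNER


def notify(site: str, patient_id: str) -> dict:
    """Build a notification whose function is the same whatever its form."""
    return {
        "site": site,
        "patient_id": patient_id,
        "delivery": choose_delivery(site).value,
        "message": "Patient may be a candidate for a palliative care consultation.",
    }


alert = notify("site_a", "p1")    # site permits interruptive alerts
banner = notify("site_b", "p1")   # site policy forbids them
```

Defaulting unknown sites to the passive banner is a design choice made for this sketch: it is the less disruptive option when local policy has not been confirmed.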

Additionally, the study investigators intended for the CDS to be not only useful but also sustainable. Therefore, the investigators encouraged every site to monitor their CDS, and although they did not prescribe the methods or the exact metrics for this, they did suggest monitoring it as part of their Plan, Do, Study, Act (PDSA) cycles. Different sites had different outcome metrics due to the unique nature of each emergency department and health system. At some sites, the audit was based on the number of alerts, and at others, the audit was based on the number of referrals to social work, palliative care consults, hospice, etc.

Based on their experience with PRIM-ER, the investigators have provided the following success factors for CDS:

- Engage clinical and technical (EHR) experts at each site

- Allow a lot of time to develop the tools

- Focus on function, not form

- Enable local adaptation of supportive CDS tools

- Continuously monitor at all sites but allow the evaluation to be customized

- Continuously engage with local stakeholders

CDS as the Intervention

In addition to serving as a supporting tool for complex interventions or other research activities, CDS tools themselves may be the interventions under evaluation in a PCT. In these cases, the CDS tool may include order sets (for specific medications or test/procedure orders), reminders, practice recommendations, clinical calculators, and others. If CDS is the primary mode of delivery for the intervention, it must be evaluated with the full rigor of the PCT. This evaluation includes the impact on patients and also the acceptability for clinicians—a requirement for pragmatic interventions so that they will be widely adopted into practice.

To measure the effectiveness of CDS, there must be a reliable way to measure patient outcomes; to be feasible, it needs to require little or no additional data collection (Richesson et al 2020). It is important to consider how outcome measures will be collected as part of the feasibility assessment of the CDS tool. Often, the research team has extra resources as a part of a research grant, and there is dedicated effort for data collection. Such data collection includes measures to determine if the target audience viewed the CDS, their actions (ordered a medication, discontinued an order, documented in a flow sheet, dismissed/ignored, etc), and the number of alerts. Asking end-users to rate the CDS as they see it in practice, collecting qualitative feedback about the CDS, or gathering data in a more structured format are commonly used approaches.
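The process measures mentioned above (number of alerts, whether they were viewed, and what users did next) could be summarized from an alert log along the following lines. The log format and field names are assumptions made for illustration; actual EHR audit data will be structured differently.

```python
from collections import Counter


def summarize_alert_log(events: list) -> dict:
    """Summarize a hypothetical alert log.

    Each event is assumed to look like:
    {"alert_id": "a1", "viewed": True, "action": "ordered_medication"}
    """
    total = len(events)
    viewed = sum(1 for e in events if e["viewed"])
    actions = Counter(e["action"] for e in events)
    return {
        "alerts_fired": total,
        "view_rate": viewed / total if total else 0.0,
        "actions": dict(actions),
    }


# Made-up example data, not trial results.
log = [
    {"alert_id": "a1", "viewed": True, "action": "ordered_medication"},
    {"alert_id": "a2", "viewed": True, "action": "dismissed"},
    {"alert_id": "a3", "viewed": False, "action": "ignored"},
    {"alert_id": "a4", "viewed": True, "action": "documented_in_flowsheet"},
]
summary = summarize_alert_log(log)
```

If these fields can be pulled from routine EHR logs, the same summary can be produced after the trial ends without dedicated research staff.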

Regardless of the format, there must be ample evidence to support the use of the intervention as evidence-based practice, and it must be possible for others to monitor its success without the support of a research team (ie, pragmatic on the PRECIS scale). In other words, careful planning is required to ensure a feasible way to measure the impact and effectiveness of the CDS tool, both in terms of effort and the availability of a reliable data source. In addition, if these interventions are implemented in a PCT and found to be effective in achieving the desired outcome, then researchers need to think about and plan for how they can be disseminated and adopted by other organizations.

Case Example: EMBED

Buprenorphine (BUP) is an effective treatment option for patients with opioid use disorder, and although patients with opioid use disorder often present to the emergency department, BUP is rarely initiated as a part of routine emergency department care (Melnick et al 2019a). The Pragmatic Trial of User-Centered Clinical Decision Support to Implement Emergency Department-Initiated Buprenorphine for Opioid Use Disorder (EMBED) is designed to test the use of CDS as an intervention for improving the rates of initiation of BUP for patients with opioid use disorder who are treated in the emergency department (Ahmed et al 2019; Melnick et al 2019a; Melnick et al 2019b; Ray et al 2019).

This 18-month parallel, cluster-randomized trial will evaluate the intervention in 20 emergency departments in 5 healthcare systems nationally (Melnick et al 2019b).

Design

To design the CDS, the investigators elicited feedback from 26 emergency department physicians to determine the necessary elements, which were that the CDS "(1) identify patients appropriately, (2) avoid workflow disruptions, (3) streamline clerical burden, and (4) help users understand the treatment process" (Melnick et al 2019a; Melnick et al 2019b). The intervention also needed to be vendor agnostic and capable of integration within multiple healthcare systems, and because the CDS is the intervention being tested, it was important to be sure that it worked the same at each organization. While the EMBED trial required this approach, this may not be essential in other studies if there is a single vendor.

The challenges and solutions encountered by investigators are described in detail elsewhere (Melnick et al 2019a), and we highlight a few of them here:

- Challenge: Each health system in the trial uses a different EHR platform and/or different build of the same vendor’s product, and there was limited ability to customize the CDS available from the EHR vendor.

- Solution: The investigators created an EHR-integrated web application with a graphical user interface similar to the final design prototype. The web application "automates a care pathway that includes patient-specific orders (emergency department medications, prescriptions, and referral) and documentation (a note in the chart reflecting the use of the app and discharge instructions)" (Melnick et al 2019a).

- Challenge: In the healthcare system where the CDS was being built, initial design and implementation took approximately 6 months. When it was proposed for dissemination in the 4 other health systems in the trial network, the informatics leaders in these systems expressed reservations due to "(1) the resources required for local customization and maintenance of a nonstandards-based intervention and (2) the security limitations and potential loss of control of a centralized, nonstandards-based solution hosted outside of their system" (Melnick et al 2019a).

- Solutions: The investigators addressed organizational issues first, including adding resources to build CDS and address security concerns. They customized the alert for each site and tested the data for sensitivity and specificity. They also tested the tool at each site for user acceptability.
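The source does not describe how sensitivity and specificity were computed. A minimal sketch, assuming each encounter has both an alert result and a reference-standard label (for example, from chart review), might look like this; the function name and the data are hypothetical.

```python
def sensitivity_specificity(alert_fired: list, truly_eligible: list) -> tuple:
    """Compare alert firing against an assumed reference standard.

    Returns (sensitivity, specificity); a value is None when undefined
    because there are no positives or no negatives in the reference.
    """
    tp = fp = tn = fn = 0
    for fired, truth in zip(alert_fired, truly_eligible):
        if fired and truth:
            tp += 1          # alert fired for an eligible patient
        elif fired and not truth:
            fp += 1          # alert fired for an ineligible patient
        elif not fired and truth:
            fn += 1          # eligible patient was missed
        else:
            tn += 1          # ineligible patient correctly not flagged
    sensitivity = tp / (tp + fn) if (tp + fn) else None
    specificity = tn / (tn + fp) if (tn + fp) else None
    return sensitivity, specificity


# Made-up example: 8 encounters, 3 truly eligible.
fired = [True, True, False, False, True, False, False, False]
truth = [True, True, True, False, False, False, False, False]
sens, spec = sensitivity_specificity(fired, truth)
```

In this made-up sample, the alert catches 2 of 3 eligible patients and correctly stays silent for 4 of 5 ineligible ones.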

Case Example: NOHARM

The Nonpharmacologic Options in Postoperative Hospital-based and Rehabilitation Pain Management (NOHARM) pragmatic trial tests a suite of CDS tools embedded in the shared EHR among 4 semiautonomous healthcare systems. In addition to self-management educational materials and a portal-based conversation guide, the NOHARM intervention includes CDS tools to advance patient-centered, guideline-concordant care. Pragmatic, EHR-based strategies have not yet been tested as a means of enhancing postoperative pain care and aligning care with evidence-based standards. The NOHARM intervention seeks to make patients aware of the benefits and effective use of nonpharmacological pain modalities. Its goal is to encourage patients to combine nonpharmacologic care with medications and other approaches to managing their postoperative pain and thereby diminish reliance on opioids after surgery. Nonpharmacological pain care (NPPC) is both integral to current guidelines and consistently underutilized despite a robust evidence base.

Because peri-operative care spans diverse sites, providers, and workflows, EHR CDS offers a unique opportunity to strategically insert defaults and prompts at feasible points in the workflow to advance NPPC. CDS can be specified to trigger on “EHR events,” such as order placement, entry of a severe pain score, registration for a clinic visit, etc. Although the frequency and timing of such events vary widely depending on the type and location of surgery, by leveraging CDS, the NOHARM intervention is able to introduce NPPC content at appropriate times that match a patient’s surgical type, setting (inpatient vs. outpatient), and phase of perioperative care.
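Event-triggered CDS can be thought of as a set of handlers keyed by event type. The sketch below is a simplified illustration, not the NOHARM build: the event names, the action labels, and the pain-score cutoff of 7 on a 0 to 10 scale are all assumptions.

```python
from typing import Callable, Optional

SEVERE_PAIN_THRESHOLD = 7  # assumed cutoff on a 0-10 scale


def on_surgical_order(event: dict) -> Optional[str]:
    # Order placement starts the pathway for the patient.
    return "send_conversation_guide"


def on_pain_score(event: dict) -> Optional[str]:
    # Only a severe score triggers a prompt; lower scores do nothing.
    if event.get("score", 0) >= SEVERE_PAIN_THRESHOLD:
        return "prompt_nppc_review"
    return None


def on_clinic_registration(event: dict) -> Optional[str]:
    return "show_nppc_reminder"


# Hypothetical mapping from EHR event type to its handler.
HANDLERS: dict = {
    "surgical_order_placed": on_surgical_order,
    "pain_score_entered": on_pain_score,
    "clinic_visit_registered": on_clinic_registration,
}


def dispatch(event: dict) -> Optional[str]:
    """Return the CDS action for an event, or None if nothing should fire."""
    handler: Optional[Callable] = HANDLERS.get(event.get("type"))
    return handler(event) if handler else None


action = dispatch({"type": "pain_score_entered", "score": 8})
```

Keeping each trigger in its own handler makes it easier to adjust timing for one surgical type or setting without touching the others.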

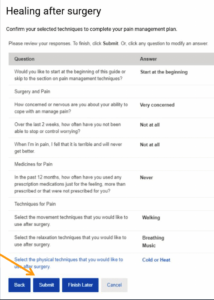

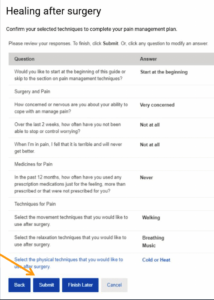

The NOHARM CDS tools inform care from the placement of the initial surgical order until 3 months after a patient’s surgery, when they rotate off study. Surgical order placement triggers the sending of a portal-based conversation guide that educates patients about NPPC and prompts them to select three modalities to include in their pain management plan. All NPPC options are validated for post-operative pain management and include movement (walking, yoga, and Tai Chi), relaxation (meditation, breathing, music, guided imagery, muscle relaxation, and aromatherapy), and physical (acupressure, massage, cold or heat, and TENS).

The guide additionally queries patients about their pain-related anxiety, confidence, and medication use. The conversation guide is built on an EHR questionnaire base with embedded HTML and graphics to optimize user experience and engagement. Because the guide uses portal questionnaire functionality, patients’ NPPC selections and item responses are saved in the EHR and can be incorporated in logic and algorithms that drive CDS farther along the surgical care pathway. Patients must submit their questionnaire responses to save them, as shown in the Figure.

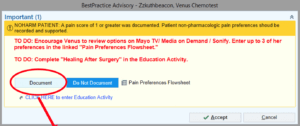

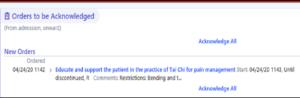

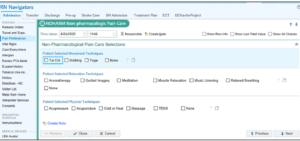

Patient information in the NOHARM trial registry includes patients’ responses to the conversation guide items and clinical documentation during routine care; these are triangulated to individualize CDS to reflect patients’ characteristics, preferences, and level of interaction with the NOHARM intervention. The Epic EHR CDS is mapped to peri-operative workflows and prompts nurses, physicians, and physical/occupational therapists to discuss and support the patient’s preferred nonpharmacologic options for pain management. For example, when a patient is admitted for surgery, if they have not yet entered NPPC preferences via the portal, the alert pictured below will prompt a nurse to solicit the patient’s NPPC preferences and enter these in EHR flowsheets.

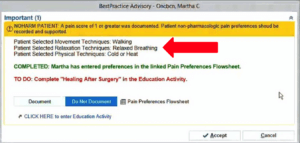

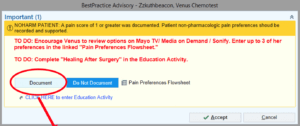

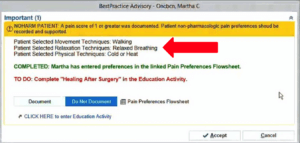

In contrast, if the patient has already made NPPC selections via their portal prior to surgery, the inpatient nurse will see this alert screen and be prompted to deliver pain management training, as feasible, and document what has been done.

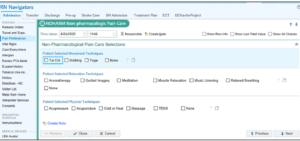
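The branching described above, where the nurse's prompt depends on whether NPPC preferences already exist, can be expressed as a simple conditional. This is a hedged sketch: the function name and message wording are invented for illustration and do not reproduce the actual Epic alert text.

```python
def admission_prompt(nppc_preferences: list) -> str:
    """Choose the nurse-facing prompt at surgical admission.

    `nppc_preferences` is assumed to hold the modalities the patient
    selected via the portal (empty if none were submitted).
    """
    if not nppc_preferences:
        # No portal selections yet: ask the nurse to collect them.
        return "Solicit the patient's NPPC preferences and enter them in flowsheets."
    # Selections exist: ask the nurse to support them and document.
    chosen = ", ".join(nppc_preferences)
    return (
        f"Deliver pain management training for: {chosen}, as feasible, "
        "and document what has been done."
    )


no_selection = admission_prompt([])
with_selection = admission_prompt(["massage", "guided imagery", "walking"])
```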

Nurses are presented with the dedicated NOHARM interface, below, to enter a patient’s NPPC preferences. The preferences will file directly to flowsheets and can be used to drive CDS.

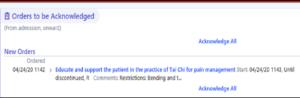

After a patient’s preferences have been documented, new orders will populate, directing providers along the patient’s perioperative care pathway to support the patient’s selected preferences.

As part of the NOHARM intervention, instructions were developed specifically for inpatient nurses, physical and occupational therapists, and post-anesthesia care unit or post-operative nurses, so that patients can be supported in their pain management preferences across the entire care continuum.

Dr. Andrea L. Cheville, Co-PI of NOHARM, provides an update on the project in this interview.

NOHARM has engaged more than 48,000 patients with a target of roughly 100,000 patients total. Dr. Cheville said the project has demonstrated that patients’ receptivity to different NPPC modalities is dynamic over the course of their perioperative journey. For example, patients who initially select heat and massage prior to surgery may try and ultimately prefer aromatherapy or guided imagery. Nurses play a critical role in helping patients to identify the modalities that are likely to offer the greatest benefit.

Intervention Outcomes

The use of CDS varies across studies, and CDS does not always need to provide prescriptive suggestions to support care processes. CDS supports a variety of outcomes, including those in the following categories (Bright et al 2012):

Summary of CDS Outcome Categories

| Category | Example Measures |
| --- | --- |
| Clinical | Morbidity/mortality, length of stay, quality of life, adverse events |
| Healthcare process | Impact on user knowledge, adherence to guidelines/process |
| Healthcare provider workload, efficiency, and organization | Clinician workload, efficiency, throughput, burnout |
| Relationship-centered | Patient satisfaction |
| Economic | Cost, resource utilization |
| Healthcare provider use and implementation | User acceptance and satisfaction |

When developing the CDS tool as an intervention, it is important to consider from the beginning how it will be evaluated. Given the categories above, it may be desirable to measure multiple outcomes, especially for research. Having positive outcomes in multiple categories gives stronger evidence for the effectiveness of the intervention and will provide a stronger case for other institutions to adopt it. For instance, if a tool is shown to have a positive effect on both clinical and economic outcomes, it is much more likely to be adopted. Regardless, all proposed outcome measures with operationalized definitions for data collection should be specified in the PCT research protocol. If other potential outcomes are discovered to be important after initiation of the trial, investigators should write an amendment to the protocol and begin data collection as soon as possible, especially if it is relatively early in the trial.

The feasibility of easily and reliably collecting the data needed to measure outcomes should be strongly considered. Once the data required are identified, will they be available? If so, can they be collected electronically/automatically, or will manual abstraction or measurement be required? Will data be available at the right time in the workflow to be useful? If they are not available, are there secondary proxy measures that may be available? If many of the data points must be collected manually, this poses a great threat to the longevity of the tool. The overall "success" of a CDS tool, therefore, includes measures of both clinical effectiveness (ie, patient impact) and practical implementation (eg, acceptability, EHR integration).