Assessing Fitness for Use of Real-World Data Sources

Section 8

Operationalizing Fitness-for-Use Assessments

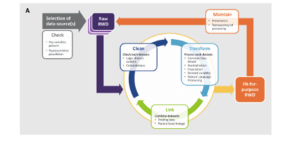

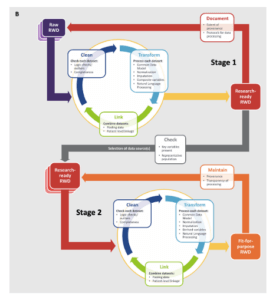

Best practices for operationalizing assessments of the fitness for use of real-world data are still evolving (Berger et al 2017; Daniel et al 2018; Mahendraratnam et al 2019). In general, approaches fall into 2 categories: single-stage and 2-stage. In a single-stage process, once a data source (or sources) has been selected, a cycle of cleaning, transformation, and (if necessary) linkage steps is applied to the "raw" real-world data, generating an output dataset that is deemed fit for use. In a 2-stage process, the raw real-world data first go through the cleaning, transformation, and linkage cycle to ensure that they meet a set of predefined baseline quality characteristics. Data that reach this baseline are described as "research ready," but an additional cycle of cleaning, transformation, and linkage may be required before the dataset can be judged fit for use in a given analysis. The Figure characterizes the process of making a real-world dataset fit for purpose (Daniel et al 2018).
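The difference between the two approaches can be pictured as a sequence of quality "gates," each applied at the end of a cleaning, transformation, and linkage cycle. The sketch below models only the assessment at each gate, not the cleaning itself; the `QualityGate` class, the `assess` function, and the example checks are hypothetical names chosen for illustration. A single-stage process corresponds to one gate, and a 2-stage process to a "research ready" gate followed by a study-specific gate.

```python
from dataclasses import dataclass
from typing import Callable

# A record is one row of harmonized data, e.g. {"patient_id": "p1", "birth_year": 1970}.
Record = dict
# A check inspects a batch of records and returns human-readable issue messages.
Check = Callable[[list[Record]], list[str]]


@dataclass
class QualityGate:
    """A named set of checks applied at the end of one cleaning/transformation/linkage cycle."""
    name: str
    checks: list[Check]

    def run(self, records: list[Record]) -> list[str]:
        issues: list[str] = []
        for check in self.checks:
            issues.extend(check(records))
        return issues


def assess(records: list[Record], gates: list[QualityGate]) -> tuple[bool, dict[str, list[str]]]:
    """Run gates in order and stop at the first gate that reports issues."""
    report: dict[str, list[str]] = {}
    for gate in gates:
        issues = gate.run(records)
        report[gate.name] = issues
        if issues:
            return False, report
    return True, report


# Hypothetical example checks.
def has_patient_id(records: list[Record]) -> list[str]:
    return [f"row {i}: missing patient_id" for i, r in enumerate(records) if not r.get("patient_id")]


def has_birth_year(records: list[Record]) -> list[str]:
    return [f"row {i}: missing birth_year" for i, r in enumerate(records) if r.get("birth_year") is None]


research_ready = QualityGate("research_ready", [has_patient_id])
study_specific = QualityGate("study_specific", [has_birth_year])

data = [{"patient_id": "p1", "birth_year": 1970}, {"patient_id": "p2", "birth_year": None}]
ok, report = assess(data, [research_ready, study_specific])
```

Here both records pass the research-ready gate, but the study-specific gate flags the missing birth year, so the dataset is research ready without yet being fit for this particular analysis.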

Figure. The Process of Making a Fit-for-Purpose Real-World Dataset

The choice between a single-stage and a 2-stage approach depends on a number of factors. If a real-world data source is being used for a study or a standard with a well-defined purpose (eg, a device registry), a single-stage process may be more efficient. If the data are to be used for many studies whose objectives are not yet defined, or are to be linked with many other sources, a 2-stage process may be more appropriate. In a 2-stage process, data within a given domain can be brought to a basic level of quality once (eg, laboratory records are mapped to LOINC and have a specified result and unit of measure), and study-specific assessments then confirm that the data are suitable for the proposed analysis (eg, all patients in the proposed cohort have a result where one is expected, and the overall distribution of values is plausible). Study teams can then focus on the factors most relevant to their project and avoid most of the heavy lifting of the initial transformation and cleaning steps.
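The laboratory example above can be sketched as two sets of checks. This is a minimal illustration under stated assumptions: the field names (`patient_id`, `loinc`, `result`, `unit`) are hypothetical, the LOINC check verifies only the code's shape (digits, a hyphen, a check digit) and not that the code exists in LOINC, and the plausible range and the tolerated share of out-of-range values are placeholders a study team would set for its own analyte. The code 4548-4 (hemoglobin A1c) is used purely as an example.

```python
import re

# Shape check only: digits, hyphen, check digit. Does not verify the code exists in LOINC.
LOINC_PATTERN = re.compile(r"^\d{1,7}-\d$")


def foundational_lab_issues(labs):
    """Baseline ('research ready') checks: LOINC-shaped code, a result, and a unit."""
    issues = []
    for i, row in enumerate(labs):
        if not LOINC_PATTERN.match(row.get("loinc") or ""):
            issues.append(f"row {i}: missing or malformed LOINC code")
        if row.get("result") is None:
            issues.append(f"row {i}: missing result")
        if not row.get("unit"):
            issues.append(f"row {i}: missing unit")
    return issues


def study_lab_issues(labs, cohort, loinc, low, high, max_out_share=0.05):
    """Study-specific checks: every cohort member has a result, and the value distribution is plausible."""
    values_by_patient = {}
    for row in labs:
        if row.get("loinc") == loinc and row.get("result") is not None:
            values_by_patient.setdefault(row["patient_id"], []).append(row["result"])

    # Completeness: each patient in the cohort should have at least one result.
    issues = [f"{pid}: no {loinc} result" for pid in sorted(cohort) if pid not in values_by_patient]

    # Plausibility: flag the analyte if too large a share of values falls outside [low, high].
    all_values = [v for vs in values_by_patient.values() for v in vs]
    if all_values:
        share_out = sum(not (low <= v <= high) for v in all_values) / len(all_values)
        if share_out > max_out_share:
            issues.append(f"{loinc}: {share_out:.0%} of values outside [{low}, {high}]")
    return issues


labs = [
    {"patient_id": "p1", "loinc": "4548-4", "result": 6.1, "unit": "%"},
    {"patient_id": "p2", "loinc": "4548-4", "result": 61.0, "unit": "%"},
    {"patient_id": "p3", "loinc": "4548-4", "result": None, "unit": ""},
]
baseline = foundational_lab_issues(labs)
study = study_lab_issues(labs, {"p1", "p2", "p3"}, "4548-4", low=3.0, high=20.0)
```

The foundational function would run once for the whole domain, while the study-specific function depends on the cohort and analyte chosen for a particular analysis.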

Many DRNs use a 2-stage process in which foundational data characterization routines run against each partner's CDM and produce descriptive statistics, such as summaries of missing values, outliers, and frequency distributions, along with the results of the network's data quality checks. These results are reviewed, and partners whose data pass are able to respond to basic queries; additional assessments are issued before specific study-based analyses. The process should be iterative, with characterization activities helping to improve data quality over time. Study-specific analyses can also be expected to uncover quality issues, and pervasive ones can be pushed down into the foundational process. This is especially true for the first studies that use a given dataset for research, or the first time a data domain is harmonized or made available in a CDM. In this manner, the gap between the foundational process and the study-specific assessments narrows, reducing the work each study team must do to complete its suitability assessments. The data characterization processes of several DRNs were outlined in the NEST Data Quality Framework and are reproduced in the Table.
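A foundational characterization routine of the kind described above might produce a per-table profile like the one in this sketch. It is not the package of any particular network: the field names are hypothetical, outliers are identified with Tukey's 1.5 × IQR rule as one common choice, and the minimum of 4 non-missing values before computing quartiles is an arbitrary assumption.

```python
from collections import Counter
from statistics import quantiles


def characterize(rows, numeric_fields, categorical_fields):
    """Descriptive profile of one partner's table: missingness, outliers, and frequencies."""
    n = len(rows)
    profile = {"n_rows": n, "missing_share": {}, "outliers": {}, "frequencies": {}}

    # Share of rows with no value for each field.
    for field in numeric_fields + categorical_fields:
        missing = sum(1 for r in rows if r.get(field) is None)
        profile["missing_share"][field] = missing / n if n else 0.0

    # Outliers by Tukey's fences: below Q1 - 1.5*IQR or above Q3 + 1.5*IQR.
    for field in numeric_fields:
        values = [r[field] for r in rows if r.get(field) is not None]
        if len(values) < 4:  # assumed minimum for a meaningful IQR
            profile["outliers"][field] = None
            continue
        q1, _, q3 = quantiles(values, n=4)
        iqr = q3 - q1
        lo, hi = q1 - 1.5 * iqr, q3 + 1.5 * iqr
        profile["outliers"][field] = sorted(v for v in values if v < lo or v > hi)

    # Frequency distribution of each categorical field (None counts as missing).
    for field in categorical_fields:
        profile["frequencies"][field] = Counter(r.get(field) for r in rows)
    return profile


ages = [30, 32, 35, 38, 40, 41, 150, None]
sexes = ["F", "M", "F", None, "F", "M", "M", "F"]
rows = [{"age": a, "sex": s} for a, s in zip(ages, sexes)]
profile = characterize(rows, ["age"], ["sex"])
```

In this toy table the age of 150 is flagged as an outlier, and each field is missing in 1 of 8 rows; in a network, profiles like this would be returned to a coordinating center for review.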

Table. Data Characterization Processes for Selected Distributed Research Networks

| Network | Collaborators: Healthcare Systems | Collaborators: Health Plans | Approach to Data Characterization |
|---|---|---|---|
| HCSRN | X | X | Detailed checks look at ranges, cross-field agreement, implausible data patterns, and cross-site comparisons. Partners execute a data characterization package each time the data are refreshed. Results are returned to the HCSRN Coordinating Center. Potential quality issues are flagged and mitigated at the partner level (HCSRN). |
| Sentinel | X | X | Detailed checks look at ranges, cross-field agreement, implausible data patterns, and cross-site comparisons. Partners execute a data characterization package each time data are refreshed. Results are returned to the Sentinel Coordinating Center. Potential quality issues are flagged and mitigated at the partner level (Sentinel Initiative; Sentinel Operations Center 2017). |
| PCORnet | X | X | Includes a foundational data curation process, which establishes a baseline level of research readiness for all network partners to support preparatory-to-research queries, and a study-specific data curation process, which includes assessments of outcomes/variables or other derived concepts for the cohort under study (PCORnet; PCORnet Distributed Research Network Operations Center; Qualls et al 2018). |
| OHDSI | X | X | Optional. Each "datamart" can generate a standardized data profile that is viewable through a web-based tool (Achilles or Data Quality Dashboard). Institutions can choose whether to share these profiles or retain them locally (OHDSI). |
| ACT | X | | Under development (Visweswaran et al 2018). |

Source: Curtis et al 2019 (NEST Data Quality Framework). ACT = Accrual to Clinical Trials; HCSRN = Health Care Systems Research Network; OHDSI = Observational Health Data Sciences and Informatics; PCORnet = National Patient-Centered Clinical Research Network.
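Two of the check types named in the Table, cross-field agreement and cross-site comparisons, can be illustrated with small functions. These are hypothetical sketches, not the checks used by HCSRN, Sentinel, or any other network: the cross-field rule assumes encounter records with `admit_date` and `discharge_date` fields, and the cross-site rule flags any site whose metric (for example, the share of patients with a given code) differs from the network median by more than an assumed relative tolerance of 50%.

```python
from datetime import date
from statistics import median


def cross_field_issues(encounters):
    """Cross-field agreement: flag encounters whose discharge date precedes the admission date."""
    return [
        e["encounter_id"]
        for e in encounters
        if e.get("admit_date") and e.get("discharge_date") and e["discharge_date"] < e["admit_date"]
    ]


def cross_site_outliers(metric_by_site, tolerance=0.5):
    """Cross-site comparison: flag sites whose metric deviates from the network median
    by more than `tolerance`, expressed relative to that median."""
    mid = median(metric_by_site.values())
    if mid == 0:
        # Relative deviation is undefined; flag any site that is not also zero.
        return sorted(site for site, v in metric_by_site.items() if v != 0)
    return sorted(site for site, v in metric_by_site.items() if abs(v - mid) / mid > tolerance)


encounters = [
    {"encounter_id": "e1", "admit_date": date(2020, 1, 1), "discharge_date": date(2020, 1, 3)},
    {"encounter_id": "e2", "admit_date": date(2020, 2, 5), "discharge_date": date(2020, 2, 1)},
]
flagged_encounters = cross_field_issues(encounters)
flagged_sites = cross_site_outliers({"A": 0.10, "B": 0.12, "C": 0.11, "D": 0.40})
```

A flagged site is not necessarily wrong; as the Table notes, potential issues are flagged and then investigated and mitigated at the partner level.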

The National Evaluation System for health Technology Coordinating Center (NESTcc) Data Quality Framework describes topics related to the capture and use of real-world data, primarily from EHRs, for the postmarket evaluation of medical devices (Curtis et al 2019). Even if a project is not directly focused on devices, many of the steps are relevant to other types of research using real-world data and can serve as a foundation from which to develop or adopt processes for quality assessment. A further defining feature of the NESTcc Data Quality Framework is that it includes a Data Quality Maturity Model, which organizations can use to benchmark themselves, identify gaps and opportunities, and develop operational plans for progressing through the stages of organizational maturity. Variations of this model have been proposed elsewhere (Callahan et al 2017). Although these efforts are nascent, attestations about organizational maturity may help satisfy the FDA's fitness-for-use requirements related to reliability, because they can address aspects of quality control (data assurance) and workflow (data accrual).

One limitation on the generalizability of the data checks and fitness-for-use assessments described so far is their underlying assumption that the source data have undergone a large-scale transformation that supports population-level quality analyses (eg, assessing missing values, identifying outliers, and examining frequency distributions). This model is less well suited to sites that provide data for a specific trial or study but otherwise do not keep their data in a "research ready" format. Quality assessments are more challenging when data are provided for only a few dozen study participants. Certain data checks may still apply, but others may need to be addressed through other means, such as site surveys. As the number of pragmatic trials continues to grow, we expect this to remain an active area of research.
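One way to operationalize this is to decide, check by check, whether a site's record count is large enough for a population-level check to be informative, and otherwise route the question to a site survey. The sketch assumes that each check carries a minimum record count; the `CheckSpec` class, the check names, and the thresholds are all hypothetical and would need to be set by the study team.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckSpec:
    name: str
    min_records: int  # hypothetical minimum count for the check to be informative


def plan_checks(n_records, specs):
    """Split checks into those run on the data and those deferred to a site survey."""
    automated = [s.name for s in specs if n_records >= s.min_records]
    survey = [s.name for s in specs if n_records < s.min_records]
    return automated, survey


SPECS = [
    CheckSpec("required_fields_present", 1),  # record-level: meaningful for any n
    CheckSpec("value_ranges", 1),             # record-level: meaningful for any n
    CheckSpec("outlier_screen", 100),         # distributional: needs a larger sample
    CheckSpec("frequency_vs_network", 500),   # compares the site to population-level data
]

small_site = plan_checks(36, SPECS)
large_site = plan_checks(1000, SPECS)
```

For a site contributing 36 participants, record-level checks still run automatically, while the distributional checks become questions for a site survey.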

Conclusion

Because the data in most real-world data sources were not collected for the study in question, it is important to assess the suitability, or fitness, of a dataset before using it in an analysis. The FDA has provided general guidance on how to approach these tasks, but consensus-based best practices have yet to emerge. In the meantime, study teams that wish to perform their own assessments can look to the procedures developed by DRNs as a model. Sharing the details of fitness-for-use assessments is not yet widespread, but we expect such efforts to increase as the use of real-world data sources grows.


Resources

Calibrating Real-World Evidence Against RCT Evidence: Early Learnings from RCT-DUPLICATE Grand Rounds, February 26, 2021

Characterizing RWD Quality and Relevancy for Regulatory Purposes; Duke Margolis Center for Health Policy; 2020

A Comparison of Data Quality Assessment Checks in Six Data Sharing Networks; EGEMS (Wash DC). 2017;5(1):8.

REFERENCES

Berger ML, Sox H, Willke RJ, et al. 2017. Good Practices for Real-World Data Studies of Treatment and/or Comparative Effectiveness: Recommendations from the Joint ISPOR-ISPE Special Task Force on Real-World Evidence in Health Care Decision Making. Value Health. 20:1003-1008. doi:10.1016/j.jval.2017.08.3019. PMID: 28964430.

Curtis LH, Brown J, Laschinger J, et al. 2019. National Evaluation System for Health Technology Coordinating Center (NESTcc) Data Quality Framework. https://nestcc.org/data-quality-and-methods/. Accessed Aug 25, 2020.

Daniel G, Silcox C, Bryan J, McClellan M, Romine M, Frank K. 2018. Characterizing RWD Quality and Relevancy for Regulatory Purposes. https://www.semanticscholar.org/paper/Characterizing-RWD-Quality-and-Relevancy-for-Duke-Margolis/fd8731fcb5db8b78b8498e2b66102830c7350be5. Accessed Aug 25, 2020.

Mahendraratnam N, Silcox C, Mercon K, et al. 2019. Determining Real-World Data's Fitness for Use and the Role of Reliability. Robert J. Margolis, MD, Center for Health Policy at Duke University. https://healthpolicy.duke.edu/publications/determining-real-world-datas-fitness-use-and-role-reliability. Accessed Aug 25, 2020.

Observational Health Data Sciences and Informatics. ACHILLES for data characterization. Available at: https://www.ohdsi.org/analytic-tools/achilles-for-data-characterization/. Accessed January 26, 2018.

PCORI. 2012. Standards in the Conduct of Registry Studies for Patient-Centered Outcomes Research.

PCORnet. PCORnet Common Data Model (CDM). Available at: https://pcornet.org/data-driven-common-model/. Accessed January 26, 2017.

PCORnet Distributed Research Network Operations Center. PCORnet Data Curation Query Package. Available at: https://github.com/PCORnet-DRN-OC/PCORnet-Data-Curation. Accessed January 26, 2018.

Qualls LG, Phillips TA, Hammill BG, et al. 2018. Evaluating Foundational Data Quality in the National Patient-Centered Clinical Research Network (PCORnet®). EGEMS (Wash DC). 6:3. doi:10.5334/egems.199. PMID: 29881761.

Sentinel Initiative. Distributed Database and Common Data Model. Available at: https://www.sentinelinitiative.org/sentinel/data/distributed-database-common-data-model. Accessed January 26, 2017.

Sentinel Operations Center. Sentinel Common Data Model - Data Quality Review and Characterization Process and Programs. Program Package version: 3.3.4, 2017. https://www.sentinelinitiative.org/about/how-sentinel-gets-its-data. Accessed Aug 25, 2020.

Visweswaran S, Becich MJ, D'Itri VS, et al. 2018. Accrual to Clinical Trials (ACT): A Clinical and Translational Science Award Consortium Network. JAMIA Open. 1:147-152. doi:10.1093/jamiaopen/ooy033. PMID: 30474072.